
The AI Black Box: We Built Something We Don't Understand and It Could Cost Us Everything 🚨

New Report Shows AI Apps Struggle to Keep Users Long-Term

Welcome to another edition of Horizon AI,

Modern AI systems are incredibly powerful, but even their creators often struggle to explain exactly how they arrive at their answers. In today’s issue, we cover insights from Connor Leahy on why AI development sometimes resembles experimentation more than traditional engineering, and why the “black box” nature of these systems raises serious questions about control and safety.

Let’s jump right in!

Read Time: 4.5 min

Here's what's new today in Horizon AI:

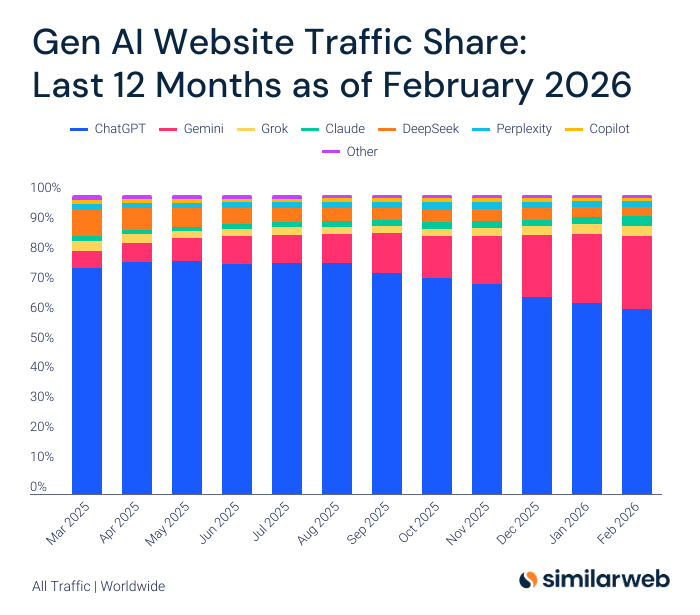

Chart of the week: Gen AI Website Traffic Share

AI Apps Attract Users but Struggle to Retain Them, Report Finds

AI Findings/Resources

AI tools to check out

Video of the week

TOGETHER WITH ATTIO

Attio is the AI CRM for modern teams.

Connect your email and calendar, and Attio instantly builds your CRM. Every contact, every company, every conversation, all organized in one place.

Then Ask Attio anything:

Prep for meetings in seconds with full context from across your business

Know what’s happening across your entire pipeline instantly

Spot deals going sideways before they do

No more digging and no more data entry. Just answers.

Chart of the week

Gen AI Website Traffic Share

ChatGPT has been consistently losing ground over the last 12 months, dropping from 75.7% to 61.7% as of February.

Gemini is slowly closing the gap, approaching a quarter of the total traffic share.

AI News

AI RESEARCH

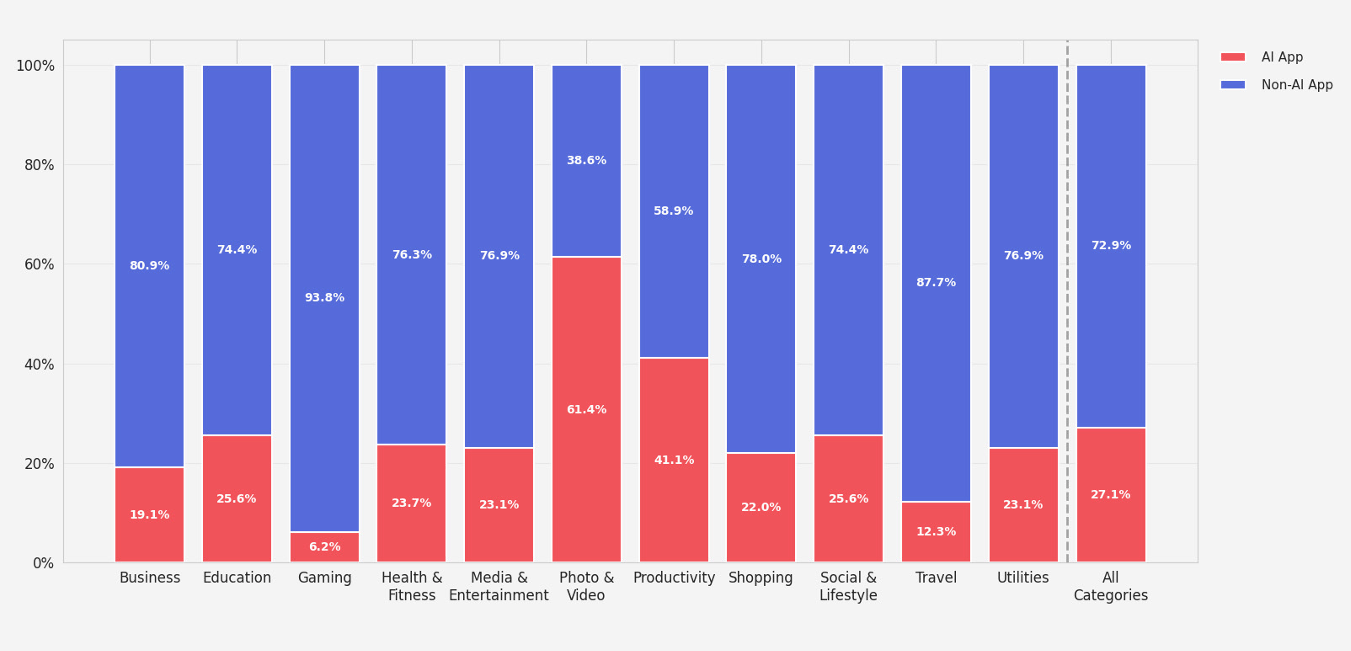

AI Apps Attract Users but Struggle to Retain Them, Report Finds

Share of apps classified as AI-powered, by category

A new report from RevenueCat suggests that adding AI to an app does not guarantee long-term user retention, even as AI tools continue to spread across mobile and web platforms.

Details:

AI-powered apps make up about 27.1% of the apps analyzed across iOS, Android, and web. The remaining 72.9% are non-AI apps.

AI apps hold on to annual subscribers less well than non-AI apps. Their yearly retention is 21.1%, compared with 30.7%, which is roughly 30% lower in relative terms.

Monthly retention is also lower for AI apps: 6.1%, versus 9.5% for non-AI apps.

AI apps show slightly stronger short-term engagement, with weekly retention at 2.5% compared with 1.7% for non-AI apps.

Refund rates are also higher for AI apps, with a median of 4.2% compared with 3.5% for non-AI apps.

Despite weaker retention, AI apps convert free trials into paying users 52% better than non-AI apps and monetize their downloads around 20% better.

The bottom line: AI apps are good at making money early, but keeping users around long-term remains the bigger challenge.
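The comparisons in the list are relative gaps between two rates, not differences in percentage points. The quick sketch below recomputes those gaps using only the figures quoted above. The `metrics` dict and the `relative_gap` helper are our own illustration and do not come from the RevenueCat report.

```python
# Figures quoted above from the RevenueCat report, in percent: (AI apps, non-AI apps).
metrics = {
    "yearly retention": (21.1, 30.7),
    "monthly retention": (6.1, 9.5),
    "weekly retention": (2.5, 1.7),
    "median refund rate": (4.2, 3.5),
}

def relative_gap(ai: float, non_ai: float) -> float:
    """Difference of the AI figure relative to the non-AI figure, in percent."""
    return (ai - non_ai) / non_ai * 100

for name, (ai, non_ai) in metrics.items():
    # Negative means AI apps are lower, positive means they are higher.
    print(f"{name}: {ai}% vs {non_ai}% -> {relative_gap(ai, non_ai):+.1f}% relative")
```

Under this reading, yearly retention for AI apps comes out about 31% lower than for non-AI apps, which matches the "about 30%" figure. The median refund rate comes out 20% higher.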

AI Findings/Resources

🏠 A Florida man sold his house in just five days after letting ChatGPT handle the entire process instead of hiring a real estate agent.

🐶 An Australian tech founder used ChatGPT and AlphaFold to design a custom mRNA cancer vaccine for his dog. A month later, the tumors had shrunk by half.

AI Tools to check out

🧠 Liminary: AI-superpowered memory that surfaces your saved knowledge in the context of the work you're doing.

👉 Vuepak: AI-powered multichannel outreach & smart delivery.

💼 Career Steer: Explore career paths that truly fit who you are and where you want to go.

🗺 TranslateAI: AI translation for e-commerce product catalogs.

✨ Monet AI: All-in-one AI video, image, and audio creation platform.

Video of the week

AI is MUTATING: And We Don't Know What It is Doing

In this intense discussion, Connor Leahy, CEO of Conjecture and a key figure in the early open-source AI movement (EleutherAI), explores the "black box" nature of artificial intelligence. He explains why building AI is more like biological experimentation than traditional engineering and why he believes we are currently on a path toward a "horrible tragedy" if we don't change how we develop these systems.

The "Petri Dish" Reality

Leahy argues that humanity has built something powerful that we fundamentally do not understand.

The Black Box: We know the math behind AI (calculus, normalization, and linear algebra). What we don't know is how the model's trillions of learned numbers actually translate into specific thoughts or behaviors.

Emergent Magic: Developers don't "program" AI to be smart; they set up a system, "twiddle" parameters, and wait to see what happens. We won't know what a model like GPT-6 can do until it is already finished and "mutating" in the petri dish.

The Golden Gate Bridge Example: He references an experiment in which researchers manipulated an AI's internal neurons to make it obsessed with a single topic, the Golden Gate Bridge. It showed that we can steer these models, but only through trial and error.
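The toy below is only a rough illustration of what "steering" means. It assumes that a concept corresponds to a single direction in a layer's activation vector. The vectors, the `steer` helper, and the strength values are all made up, and this is not the researchers' actual method. The basic move is to nudge an internal activation along the concept direction. The activation's alignment with that concept then grows with the chosen strength, and picking that strength is the trial-and-error part.

```python
import math

def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def steer(activation, direction, strength):
    """Hypothetical helper: push an activation along a concept direction."""
    unit = normalize(direction)
    return [a + strength * u for a, u in zip(activation, unit)]

# Toy 4-dimensional activation and a made-up "Golden Gate Bridge" direction.
activation = [0.2, -0.1, 0.4, 0.0]
bridge_direction = [0.0, 1.0, 0.0, 1.0]

for strength in (0.0, 1.0, 5.0):
    steered = steer(activation, bridge_direction, strength)
    # Projection onto the concept direction: how strongly the activation "points at" it.
    score = dot(steered, normalize(bridge_direction))
    print(f"strength={strength}: concept score={score:.2f}")
```

Because the direction is normalized, each unit of strength raises the concept score by exactly one. In a real model there are millions of such directions, and nobody labels them in advance.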

The Mechanics: Trillion-Weight Pattern Learners

Leahy demystifies what an LLM actually is, stripping away the hype to look at the raw computation.

General Pattern Learners: He describes LLMs as the "holy grail" of AI. They are not just chatbots but machines that can learn the patterns of anything, from human speech to computer code.

Feed-Forward Layers: At its core, the AI takes an input, breaks it into numbers, and runs those numbers through "feed-forward" layers (essentially multiplying and adding massive matrices). It then converts the resulting numbers back into words.

The Connection Problem: The "magic" lies in the weights, a trillion numbers that determine how strongly different virtual neurons are connected. The problem is that we still have no reliable way to ensure those weights align with human safety.
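Below is a deliberately tiny sketch of the pipeline described above, in plain Python. It turns a word into numbers, runs them through one feed-forward layer, and turns the result back into a word. Everything here is hypothetical: the vocabulary, sizes, random weights, residual add, and helper names. Real LLMs also interleave attention layers and normalization across many stacked blocks, and their weights come from training rather than a random seed. The point is that every step is just multiplying and adding, and all the model's "knowledge" lives in the weight matrices. The toy has 104 such numbers, versus roughly a trillion in the models Leahy describes.

```python
import random

VOCAB = ["the", "cat", "sat", "on", "mat"]  # hypothetical toy vocabulary
D_MODEL, D_HIDDEN = 4, 8                    # toy sizes; real models use thousands

rng = random.Random(0)

def rand_matrix(rows, cols):
    return [[rng.gauss(0.0, 0.5) for _ in range(cols)] for _ in range(rows)]

# The "weights": in a real model every one of these numbers is learned in training.
EMBED = rand_matrix(len(VOCAB), D_MODEL)     # word -> vector
W1 = rand_matrix(D_MODEL, D_HIDDEN)          # feed-forward layer: expand
W2 = rand_matrix(D_HIDDEN, D_MODEL)          # feed-forward layer: project back
UNEMBED = rand_matrix(D_MODEL, len(VOCAB))   # vector -> one score per word

def matvec(vec, matrix):
    """Row vector times matrix: nothing but multiplying and adding."""
    return [sum(v * matrix[i][j] for i, v in enumerate(vec)) for j in range(len(matrix[0]))]

def relu(vec):
    return [max(0.0, x) for x in vec]

def next_word(word):
    x = EMBED[VOCAB.index(word)]                   # 1. word -> numbers
    h = relu(matvec(x, W1))                        # 2. feed-forward: multiply, add, nonlinearity
    x = [a + b for a, b in zip(x, matvec(h, W2))]  #    residual add, as in transformer blocks
    scores = matvec(x, UNEMBED)                    # 3. numbers -> a score for every word
    return VOCAB[max(range(len(VOCAB)), key=scores.__getitem__)]

n_weights = sum(len(m) * len(m[0]) for m in (EMBED, W1, W2, UNEMBED))
print("toy weight count:", n_weights)
print("after 'cat' the untrained toy predicts:", next_word("cat"))
```

Since the weights are random, the predicted word is meaningless. Training would adjust those 104 numbers until the predictions match real text. That process is how a model becomes useful, and it is also why nobody can read off what any single weight "means".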

The "Doomer" Label and Pro-Technology Stance

Despite his dire warnings, Leahy rejects the idea that he is "anti-AI."

Nuclear Analogy: He compares AI to nuclear power. He believes nuclear energy is great but is firmly against "private nuclear weapons." In his view, AI in its current unaligned form is a weapon we can't control.

A Life Worth Living: Leahy admits that the outcome for humanity looks bleak under current incentives (capital and speed), but he views the fight to "do AI properly" as the most glorious and important mission possible.

Humanist Future: He emphasizes that this isn't just a technical problem for coders; it's a social and political one. He urges listeners to demand change from lawmakers through organizations like ControlAI.

The Social and Existential Risks

Leahy's transition from open-source pioneer to AI skeptic came from a realization about safety and incentives.

The Open Source "Burn": He originally championed open-source AI but realized that releasing powerful, unaligned models is like giving away instructions for dangerous technologies without any safety checks.

The Fading of Humanity: He warns of a future where humanity "fades into the dark" as AI takes over intellectual and economic labor, driven by capital incentives that prioritize profit over the survival of the species.

That’s a wrap!

We'd love to hear your thoughts on today's email! Your feedback helps us improve our content.

Not subscribed yet? Sign up here and send it to a colleague or friend!

See you in our next edition!

Gina 👩🏻‍💻