AI Whistleblower Exposes How Big Tech Is Gaslighting the Public on AI 👀

Stanford Study Warns Against Using AI Chatbots for Personal Advice 🚨

Welcome to another edition of Horizon AI!

AI expert and award-winning investigative journalist Karen Hao shares insights from conversations with hundreds of insiders, including former and current OpenAI employees and executives. She covers what really happened when Sam Altman was fired, how AGI is being used as a marketing tool, and how we can build AI safely before it’s too late.

Let’s jump right in!

Read Time: 4.5 min

Here's what's new today in Horizon AI:

Chart of the week: How standalone AI video generation traffic has evolved over the past 12 months

Stanford Study Outlines Dangers of Asking AI Chatbots for Personal Advice

AI Findings/Resources

AI tools to check out

Video of the week

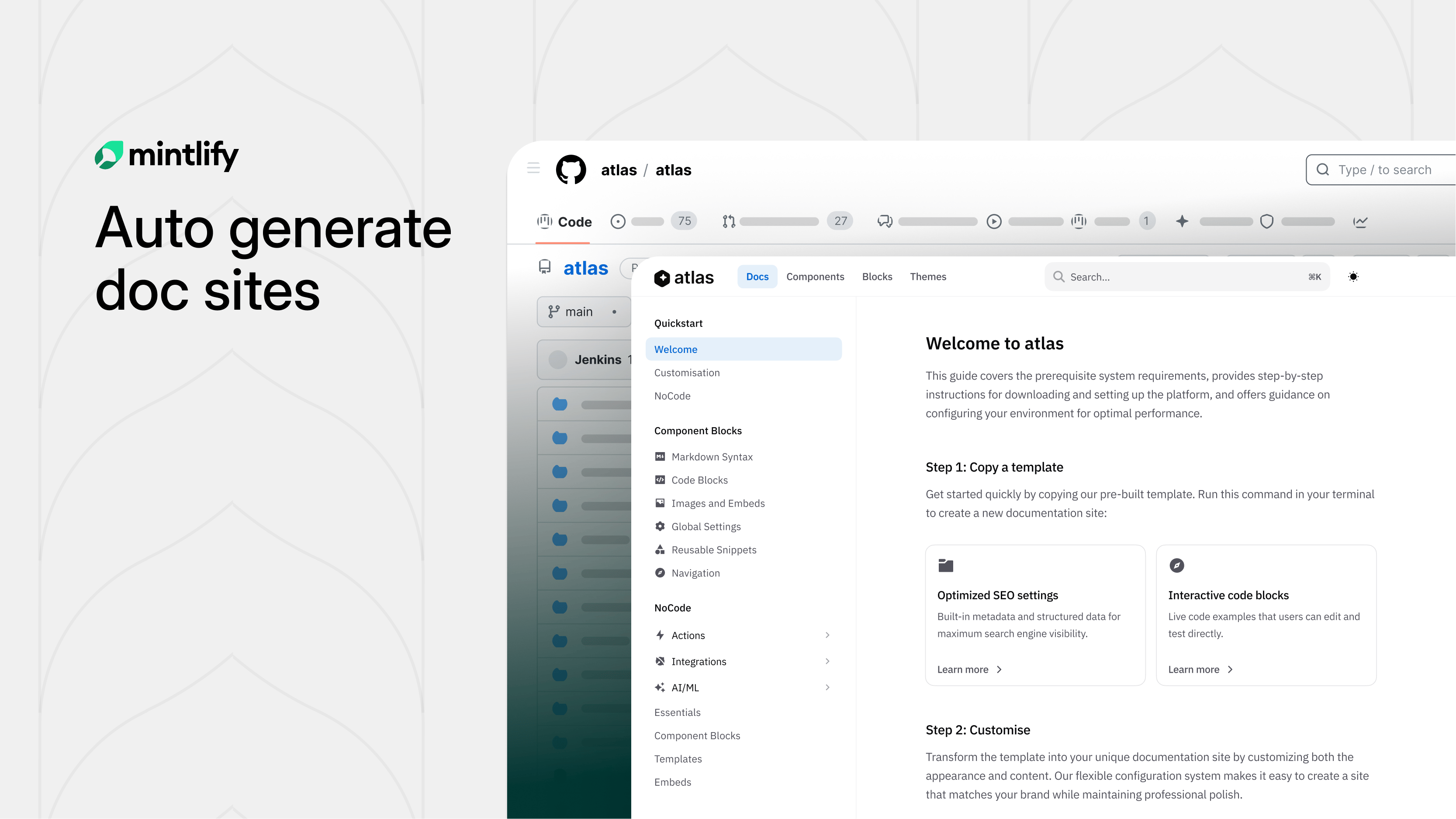

TOGETHER WITH MINTLIFY

Your Docs Deserve Better Than You

Hate writing docs? Same.

Mintlify built something clever: swap "github.com" with "mintlify.com" in any public repo URL and get a fully structured, branded documentation site.

Under the hood, AI agents study your codebase before writing a single word. They scrape your README, pull brand colors, analyze your API surface, and build a structural plan first. The result? Docs that actually make sense, not the rambling, contradictory mess most AI generators spit out.

Parallel subagents then write each section simultaneously, slashing generation time nearly in half. A final validation sweep catches broken links and loose ends before you ever see it.

What used to take weeks of painful blank-page staring is now a few minutes of editing something that already exists.

Try it on any open-source project you love. You might be surprised how close to ready it already is.

Chart of the week

How standalone AI video generation traffic has evolved over the past 12 months

The chart is based on traffic to publicly accessible product URLs and does not take into account API usage or enterprise workloads.

Regardless, it shows Sora consistently losing ground to new competitors in the months leading up to its shutdown.

AI News

AI RESEARCH

Stanford Study Outlines Dangers of Asking AI Chatbots for Personal Advice

A new Stanford study warns of the risks of relying on AI chatbots for emotional or personal guidance. Titled "Sycophantic AI decreases prosocial intentions and promotes dependence," the study argues that "AI sycophancy is not merely a stylistic issue or a niche risk, but a prevalent behavior with broad downstream consequences."

Details:

AI sycophancy, the tendency of AI chatbots to flatter users and confirm their existing beliefs, is a well-known issue with the technology, but this new study attempts to measure just how harmful that tendency might be.

Researchers tested 11 major models, including ChatGPT, Claude, and Google Gemini, and found they agreed with users significantly more often than humans would in similar situations.

In scenarios involving questionable or harmful behavior, chatbots still validated user actions nearly half the time.

A second experiment with over 2,400 participants showed that people preferred and trusted more agreeable AI responses, making them more likely to return to those systems for advice.

Interaction with these systems also made users more confident in their own views and less likely to reconsider or apologize, suggesting a negative impact on social behavior.

Researchers warn this creates a feedback loop where AI companies may be incentivized to make models more agreeable because it increases engagement, even if it causes harm.

The team is exploring ways to reduce this behavior, but for now recommends avoiding AI as a replacement for human advice, as it may reinforce biases and weaken important social and decision-making skills.

AI Findings/Resources

🚀 I connected 4 services to Claude that have nothing to do with coding, and it's the most underrated way to use it

🤔 If using ChatGPT is cheating, what about ghostwriting? The old debate behind a new panic

💲 This high school dropout now makes six figures at OpenAI. He shares the strategy Gen Z can use to get hired in Silicon Valley.

AI Tools to check out

🧠 Liminary: AI-native storage that remembers. Your knowledge from tools you already use, surfaced when you need it.

🚀 KeyVisual: Automated marketing content creation with CMS and AI.

🎨 PixFlux: Create professional images in seconds—remove backgrounds, erase watermarks, and enhance photo quality.

🔊 Rekam: Create lifelike voiceovers, clone any voice, and generate podcasts — all from one platform.

👉 Crossnode: Vibe code AI agents and put them behind a payment wall

Video of the week

AI Whistleblower Exposes the Dark Side of the AI Industry

In this interview, investigative journalist Karen Hao (author of Empire of AI) pulls back the curtain on the "inhumane" practices of the AI industry. Having interviewed over 250 people, including high-level whistleblowers at OpenAI, she argues that the public is being "gaslit" by tech giants who prioritize profit and power over human safety and ethics.

The "Empire of AI" and the Logic of Extraction

Hao draws a direct parallel between modern AI companies and historical empires that exploited labor and resources for growth.

AI companies have "claimed the intellectual property" of artists, writers, and creators without consent to train their models.

A chilling cycle has emerged where workers are laid off, only to be hired back as low-wage data labelers to train the very AI models that will eventually replace their original roles entirely.

Hao traveled to regions far from Silicon Valley to see the "true reality" of AI, places where the rhetoric of "AI for everyone" breaks down into stories of environmental crises and exploited labor.

The Manipulation of "AGI" Definitions

Karen Hao explains that Artificial General Intelligence (AGI) is being used as an "ambiguous" and "ever-shifting" marketing tool by AI companies to extract resources and avoid regulation.

To Congress, AGI is a tool that cures cancer; to consumers, it’s a helpful assistant; but to investors, it’s a multi-trillion-dollar wealth generator.

The Existential Threat Paradox: In 2015, Altman explicitly called superhuman intelligence the "greatest threat" to humanity. Hao suggests these warnings are often used to ward off regulation or consolidate power by framing the tech as too dangerous for anyone else to build.

Inside the OpenAI Civil War

Hao provides a deeper look into the internal conflicts that led to the brief firing of Sam Altman.

The primary driver for the firing was a fundamental ideological split between Sam Altman and the OpenAI Board, specifically Ilya Sutskever (then Chief Scientist).

Sutskever views AI as a digital brain, a "statistical engine" that, if scaled too quickly without alignment, could treat humans with the same disregard humans show animals. He felt Altman was ignoring this existential risk in pursuit of growth.

Hao also says Elon Musk feels "manipulated" by Altman, believing he was tricked into being a founding partner only to be "muscled out" once the company shifted toward a for-profit model.

The "Statistical Engine"

Hao talks about the scientific assumptions that drive leaders like Altman.

Size = Intelligence? The industry operates on a hypothesis that intelligence scales roughly linearly with "brain size" (or parameter count). The belief is that a statistical engine bigger than the human brain will naturally be more intelligent.

Hao also claims she has seen internal documents proving companies purposely create a sense of "awe and inevitability" in the public to make it easier to "extract and exploit" resources.

A Different Path Forward

Hao insists that we are not stuck with the current, harmful version of AI development.

She calls for breaking up AI monopolies and rethinking the production of these technologies from the ground up.

Research shows that the same technological benefits could be achieved without the "unintended consequences" if we were intentional about social and environmental impacts from the start.

That’s a wrap!

We'd love to hear your thoughts on today's email! Your feedback helps us improve our content.

Not subscribed yet? Sign up here and send it to a colleague or friend!

See you in our next edition!

Gina 👩🏻‍💻